Why Programmable Assets Break in an AI-Native World

Behaviour > Ownership

Most programmable assets today are still designed as state containers. They can:

• store data

• change state

• execute predefined logic

But they don’t evolve in a way that actually reflects the user over time.

The problem isn’t programmability

It’s signal integrity. Once behaviour becomes:

• cheap to simulate

• easy to outsource

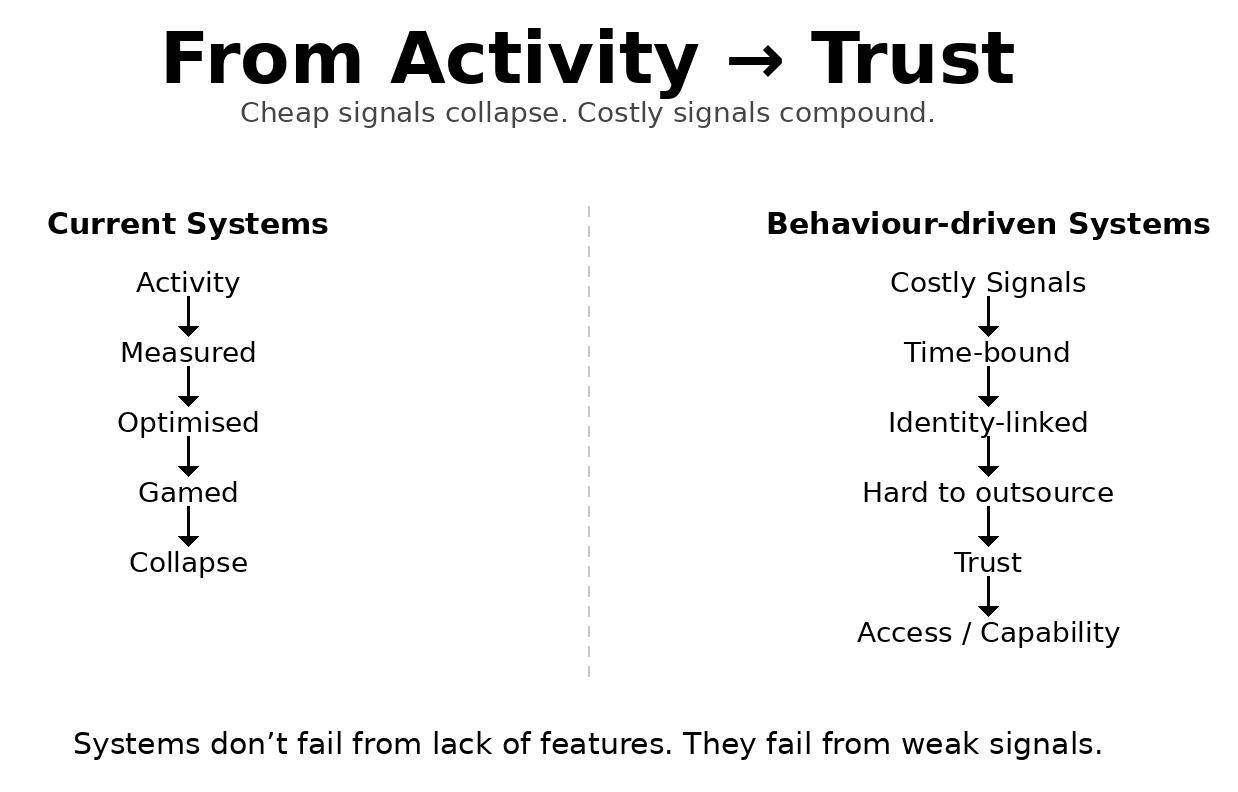

• or fully automated (AI, bots, farms)

any system that relies on “activity” as a signal starts to degrade. This is just Goodhart’s Law at scale: when a measure becomes a target, it stops being useful.

This creates a structural failure

If:

• actions unlock access

• access has value

then those actions will be optimised. Not for truth. For extraction.

So the real question changes

It’s no longer: “what can assets do?”

It becomes: what signals are expensive enough to trust?

A viable system needs signals that are:

1. costly to produce (time, effort, coordination, reputation)

2. time-bound (can’t be precomputed or stockpiled)

3. identity-linked (persistent across interactions)

4. hard to outsource (resistant to delegation or automation)

This shifts the model entirely

From: assets as owned objects

To: assets as evolving permission systems

Where:

• access

• capabilities

• and progression

are functions of verified behaviour over time.

The architecture implication

You don’t just need tokens or state transitions. You need:

• a rules engine tied to behavioural signals

• a signal weighting layer (not all actions equal)

• an identity layer linking wallets to longitudinal behaviour

The consequence

Most current “programmable asset” models will collapse under adversarial pressure. Not because they lack features, but because they lack trust-weighted signal design.

The opportunity

The next generation of platforms won’t win by adding more logic. They’ll win by answering one question: What behaviour is real, and how do you prove it over time?

The systems that solve this won’t look like asset platforms. They’ll look like trust infrastructures.
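
To make the three architectural pieces concrete (an identity layer, a signal weighting layer, and a rules engine), here is a minimal Python sketch. Everything in it is an assumption for illustration: the names `Signal`, `WEIGHTS`, `WALLET_TO_IDENTITY`, `RULES`, `trust_score` and `capabilities`, the weight values, and the per-day cap are hypothetical, not a reference design. The cap is one simple way to make a score time-bound, so that a burst of cheap activity cannot stand in for sustained behaviour.

```python
from collections import defaultdict
from dataclasses import dataclass

DAY = 86_400  # seconds in a day


@dataclass(frozen=True)
class Signal:
    identity: str     # persistent identity, not a single wallet
    kind: str         # type of action observed
    timestamp: float  # seconds since some epoch


# Hypothetical weights: cheap, automatable actions count for little.
WEIGHTS = {"click": 0.1, "transfer": 0.5, "peer_attestation": 5.0}

# Hypothetical identity layer: several wallets map to one longitudinal identity.
WALLET_TO_IDENTITY = {"0xA1": "alice", "0xA2": "alice", "0xB1": "bob"}

# Hypothetical rules engine: capability name -> minimum trust score.
RULES = {"basic_access": 1.0, "governance_vote": 20.0}


def identity_for(wallet: str) -> str:
    """Resolve a wallet to its persistent identity (KeyError if unknown)."""
    return WALLET_TO_IDENTITY[wallet]


def trust_score(signals, identity, daily_cap=5.0):
    """Sum weighted signals per day and cap each day's contribution.

    Because each day is capped, a high score needs activity spread over
    many days; it cannot be precomputed or bought in a single burst.
    """
    per_day = defaultdict(float)
    for s in signals:
        if s.identity == identity:
            per_day[int(s.timestamp // DAY)] += WEIGHTS.get(s.kind, 0.0)
    return sum(min(total, daily_cap) for total in per_day.values())


def capabilities(score):
    """Return the capabilities whose threshold the score meets."""
    return sorted(name for name, threshold in RULES.items() if score >= threshold)


# A farm producing 1000 clicks in one day from one of bob's wallets...
burst = [Signal(identity_for("0xB1"), "click", 10.0 + i) for i in range(1000)]
# ...versus one peer attestation per day for five days across alice's wallets.
steady = [
    Signal(identity_for("0xA1" if d % 2 == 0 else "0xA2"), "peer_attestation", d * DAY + 100)
    for d in range(5)
]

bob_caps = capabilities(trust_score(burst, "bob"))        # ['basic_access']
alice_caps = capabilities(trust_score(steady, "alice"))   # ['basic_access', 'governance_vote']
```

In this sketch the burst of 1000 clicks is capped at a single day's worth of score, while five days of a costlier signal clears the higher threshold. It does not address the harder problem of making a signal resistant to delegation; that depends on what can actually be verified.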

Stephen James Burford

Thinking about behaviour-driven systems, identity, and trust in AI-native environments

© Stephen James Burford. All rights reserved.